Amazon runs more than 10,000 A/B tests every year. Do you know why? The reason is simple: used correctly, testing can increase your conversion rate by 300%! Who wouldn’t want to improve their conversion rate that much? Almost everyone would, but the reality is quite different: only 44% of companies use A/B testing when trying to optimize their conversion rate. In other words, most companies are not using it yet, and if you are one of them, it may be time to add it to your marketing strategy to stay one step ahead of the competition.

The principle is actually quite simple: you test two or more versions of a web page to find the combination that generates the most traffic or conversions. There is no limit to the number of tests you can run, so you can optimize every element that makes up your web pages or the emails you send. To help you understand this concept, I have created this practical guide that explains what A/B testing is. It also includes case studies, along with the steps and tools you can use to run your tests efficiently.

A/B Testing campaign

What is A/B testing?

A/B testing is a marketing approach that consists of testing several variants of a web page to determine which one performs best.

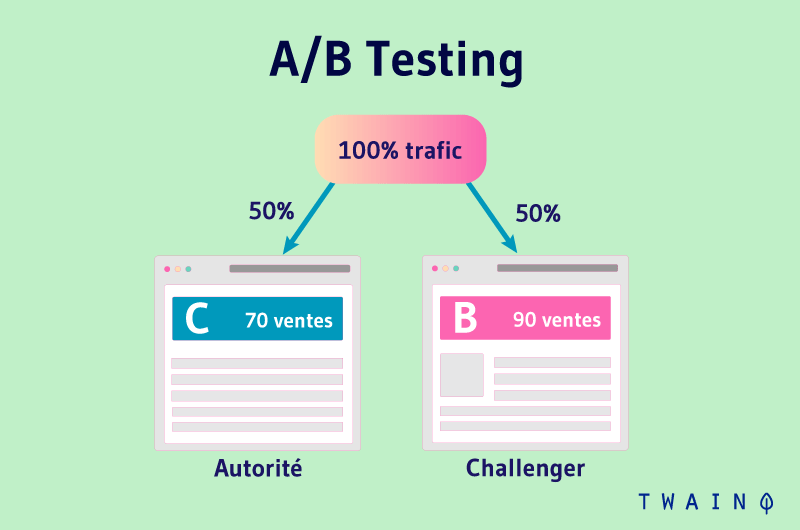

It most often compares two versions of the same web page, each receiving an equal share of randomly distributed traffic. In other words, you show version A of a piece of marketing content to one half of your audience and version B to the other half.

To perform A/B testing, you create two different versions of a web page or email with usually only one variable changed. Then, you show the two versions to two similar-sized audiences to evaluate which one generates more traffic, clicks or conversions over a given period of time.

In this way, A/B testing helps you evaluate how one version of your content performs compared to another. For example, let’s say you want to move your call-to-action (CTA) button from the left of your landing page to the center.

To run this test, you create an alternative version of the page that includes the new button position. The existing page, A, is the “control version”, and B is the “treatment page” or “challenger”.

Once page B is ready, you move on to the testing phase by sending a predetermined percentage of visitors to each version. Ideally, both versions should receive the same percentage of visitors.
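To picture how a tool might split traffic randomly yet consistently, here is a minimal Python sketch. It assumes each visitor has a stable identifier (for example, from a cookie). The names `assign_variant`, `visitor_id` and `experiment` are hypothetical and do not come from any particular tool. Hashing the identifier gives each visitor a fixed version, so a returning visitor does not see A on one visit and B on the next.

```python
import hashlib
from collections import Counter


def assign_variant(visitor_id: str, experiment: str, variants=("A", "B")) -> str:
    """Assign a visitor to one of the variants with equal probability.

    The same visitor_id and experiment always produce the same variant,
    so a returning visitor keeps seeing the same version of the page.
    """
    key = f"{experiment}:{visitor_id}".encode("utf-8")
    # Turn the SHA-256 digest into an integer and map it onto a variant.
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    # Simulate 10,000 hypothetical visitors on a CTA-position test.
    counts = Counter(assign_variant(f"visitor-{i}", "cta-position") for i in range(10_000))
    print(counts)  # roughly 5,000 visitors per version
```

In this sketch the split is simply equal across the listed variants. Testing tools usually also let you choose other percentages.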

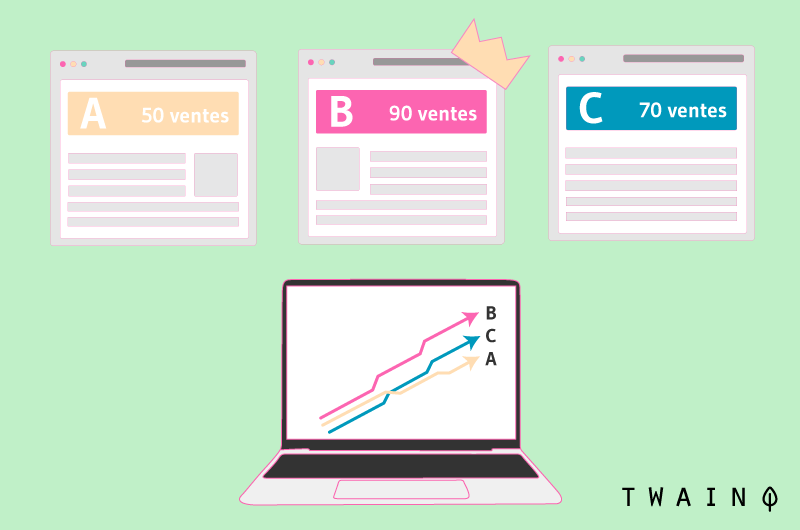

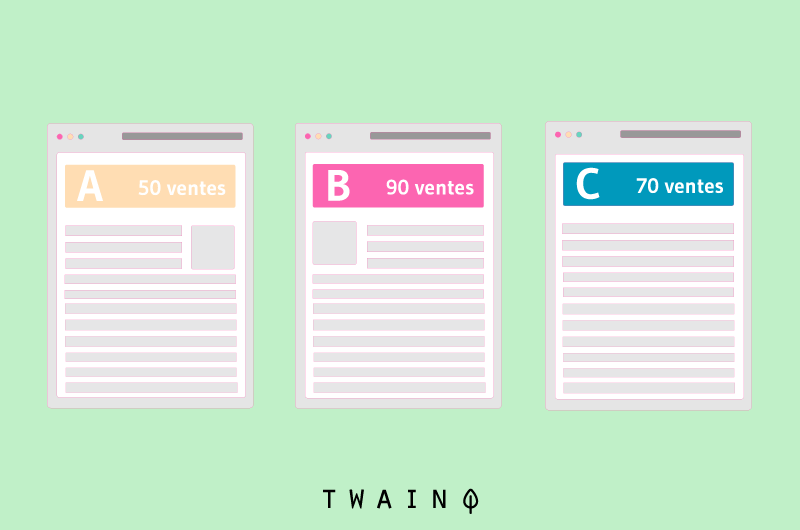

That said, you are not limited to only two different versions to test. You can compare the performance of as many pages as you want with the different types of A/B testing.

The different types of A/B testing: A/A, Split, A/B/n & Multivariate

There are several types of A/B tests and you don’t have to limit yourself to comparing two versions of the same web page.

The A/A test: Test the accuracy of the tools

Unlike A/B testing, which compares two versions of the same web page, A/A testing uses the same version to check the accuracy of an A/B testing tool.

If you are not convinced of the reliability of a testing tool, you can simply use this type of test to check its accuracy. Since both groups see exactly the same page, a reliable tool should not report a significant difference between them.
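In an A/A test both groups see the same page, so any “winner” is pure chance. The Python sketch below simulates many A/A tests. It assumes a 5% conversion rate, 1,000 visitors per group and a 95% threshold. It uses a standard two-proportion z-test, which may differ from the statistics your tool actually uses. At a 95% threshold, roughly 5% of A/A tests are expected to show a false “winner”. If a tool reports many more than that in real A/A tests, it deserves a closer look.

```python
import random
from math import sqrt
from statistics import NormalDist


def two_sided_p_value(conv_a: int, n_a: int, conv_b: int, n_b: int) -> float:
    """Two-sided p-value of a pooled two-proportion z-test."""
    p_pool = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(p_pool * (1 - p_pool) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 1.0  # no conversions (or only conversions) in both groups
    z = (conv_b / n_b - conv_a / n_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


def aa_false_positive_rate(runs=500, visitors=1000, rate=0.05, alpha=0.05, seed=7):
    """Simulate A/A tests where both groups share the same true conversion rate.

    Returns the share of runs in which the test wrongly declares a difference.
    """
    rng = random.Random(seed)
    false_positives = 0
    for _ in range(runs):
        conv_a = sum(rng.random() < rate for _ in range(visitors))
        conv_b = sum(rng.random() < rate for _ in range(visitors))
        if two_sided_p_value(conv_a, visitors, conv_b, visitors) < alpha:
            false_positives += 1
    return false_positives / runs


if __name__ == "__main__":
    print(f"False 'winners': {aa_false_positive_rate():.1%}")  # close to 5%
```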

Split testing: For different URLs

While A/B testing uses versions of the same page created in a testing tool’s editor, split testing lets you compare two versions of a page hosted on different URLs.

In split testing, the visitor is therefore redirected to another URL, whereas in A/B testing they stay on the same page.

Multivariate testing and A/B/n testing

A/B testing allows you to test only two versions of a page based on a single variable, but when you want to test several pages or variables at the same time, you should use multivariate or A/B/n testing.

A/B/n testing allows you to test multiple versions of the same page simultaneously, still using a single variable. For example, you can change the color of your CTA button for version B of your test and change an image for version C without affecting the CTA. Thus, only one variable changes from one version to another compared to version A.

Multivariate testing works almost like A/B/n testing, except that it tests the variations together instead of one by one. In fact, this type of testing allows you to determine which of your different variable combinations performs best.

For example, if you have four different versions to test, you might have the following changes:

- A: Original;

- B: Change the color of the CTA button;

- C: Change the image without touching the CTA;

- D: Change the color of the CTA and the image.

Then you have the following combinations:

- A: Original image + original CTA color;

- B: Original image + new CTA color;

- C: New image + original CTA color;

- D: New image + new CTA color

Keep in mind that if you add several variables or page versions, you will end up with a large number of pages to test. This can make your analysis inefficient, especially if you are not yet experienced with this approach.
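The number of versions grows this quickly because you multiply the options for each variable, which is called a full-factorial design. The short Python sketch below uses `itertools.product` to reproduce the four combinations from the image/CTA example. The third “headline” variable is a hypothetical addition that shows how fast the count grows.

```python
from itertools import product
from string import ascii_uppercase


def full_factorial(factors: dict) -> list:
    """Return every combination of factor levels, one dict per version."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


# The two variables from the example above, each with two options.
factors = {
    "image": ["Original image", "New image"],
    "cta_color": ["original CTA color", "new CTA color"],
}

for label, combo in zip(ascii_uppercase, full_factorial(factors)):
    print(f"{label}: {combo['image']} + {combo['cta_color']}")

# A hypothetical third variable with three headlines: 2 x 2 x 3 = 12 versions.
factors["headline"] = ["Headline 1", "Headline 2", "Headline 3"]
print(len(full_factorial(factors)), "versions to test")
```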

5 reasons why you should do A/B testing in 2019

A/B testing has many advantages and its use allows you to test a variety of variables to optimize your overall performance.

1. A/B testing: A very inexpensive and profitable approach

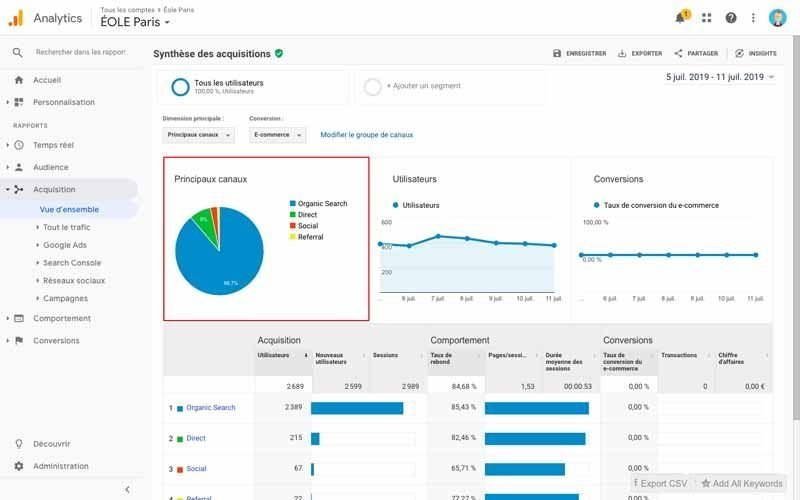

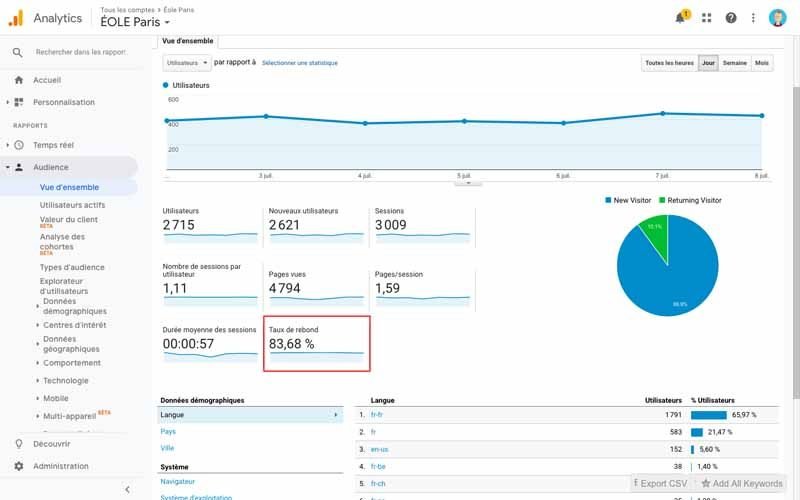

A/B testing is inexpensive because free tools such as Google Analytics can run your tests automatically. It is also very cost-effective, which is why you should adopt it.

Let’s say you want to use a free solution for your test, and you employ a writer whom you pay €2,000 per month. This writer publishes five articles per week, or about 20 per month, which works out to €100 per piece of content.

Now, if one blog post generates 10 leads on average, you spend about €100 to generate those 10 leads, or €10 per lead. To improve this figure, you decide to make some changes to your content, for example by adding infographics. To evaluate the impact of this change, you run an A/B test with Google Analytics.

You then ask the writer to spend two days creating two variations of the same article. This means you will lose €100 and probably 10 leads, since you end up with only one piece of content over those two days instead of two different ones.

But if adding infographics performs so well that it doubles your leads, your investment will have paid off. In fact, each of your next articles could generate 20 leads instead of 10. So, for the same €100, you will generate twice as many leads. Who wouldn’t want to take advantage of this?

Of course, your test may also fail, but you can learn from the results and do better in your next tests.

Better yet, you can keep improving to go from 20 to 30, 40 or 60 leads for every €100 of content created. That is why this approach can be very cost-effective, even when you use paid tools.
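The arithmetic in this example is easy to check. The Python sketch below assumes four weeks per month (20 articles for €2,000), which is what the €100-per-article figure implies. The function names are illustrative only.

```python
def cost_per_article(monthly_pay: float, articles_per_week: int, weeks_per_month: int = 4) -> float:
    """Cost of one article, assuming a fixed monthly pay."""
    return monthly_pay / (articles_per_week * weeks_per_month)


def cost_per_lead(article_cost: float, leads_per_article: float) -> float:
    """How much each lead costs you in content production."""
    return article_cost / leads_per_article


article_cost = cost_per_article(2000, 5)  # 100.0 euros per article
for leads in (10, 20, 40):
    print(f"{leads} leads per article -> {cost_per_lead(article_cost, leads):.2f} EUR per lead")
```

Doubling the leads per article halves the cost per lead, from €10 to €5. That is the gain the test is meant to uncover.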

Apart from cost and profitability, A/B testing allows many marketers to achieve several goals.

2. Increase website traffic

A/B testing lets you try different wordings for your page titles or meta descriptions and see which ones get more people to click through to your website. Once you find the right formula, you can increase the traffic to your website.

3. Higher conversion rate

Testing different locations, colors or anchor text for your CTAs can change the number of people who click on them to reach a landing page. More clicks mean more people filling out your forms or completing other actions, which translates into more leads or customers.

4. Lower bounce rate

If your bounce rate is high, that is, many visitors leave your web pages shortly after arriving, you can use A/B testing to try to improve things.

For example, you can:

- Try a different introduction format;

- Use other visual formats;

- Change the font or size of your text;

- Change the design of the page itself;

- Etc.

Testing these changes can help you reduce your bounce rate and retain many more visitors.

5. Reduce shopping cart abandonment

According to MightyCall, e-commerce companies see 40 to 75% of customers leave their website with items still in their cart. With this in mind, you can reduce this abandonment rate by testing:

- Different product photos;

- The design of shopping pages;

- The presentation of the shipping costs;

- Etc.

There is no doubt that the use of A/B testing is very important. Let’s move on to the implementation stage.

When do you know you need to perform A/B testing?

There is only one answer to this question: you should always and continually perform A/B testing. By now you know the many advantages of this technique, and it would be wise to use it to stay ahead of the competition.

However, your results will be more accurate if your business is already established rather than just starting up. An established business already generates targeted traffic and qualified leads, so its test results reflect its target market.

This doesn’t mean that A/B testing is useless for a new business. It just means you may get less accurate and relevant results.

3 A/B testing case studies to inspire you

Let’s take a look at three A/B testing examples to see how other companies have successfully improved their performance.

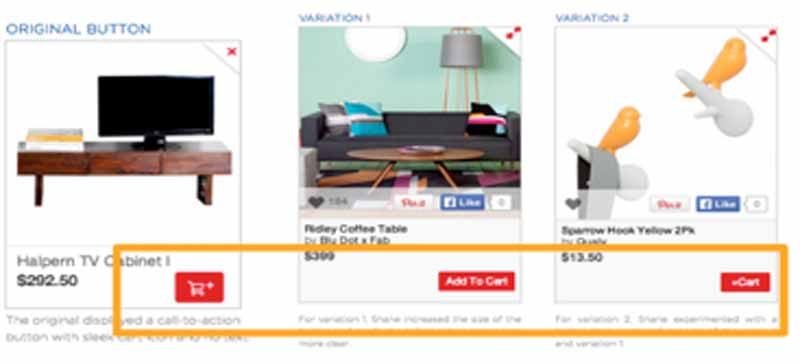

Case Study 1: 49% increase in CTR by adding text to the call-to-action button

This example is one of the case studies presented by AB Tasty. The A/B test was run by Fab, an online community whose members can buy or sell:

- Clothing;

- Accessories;

- Collectibles;

- Household items;

- Etc.

The objective of the test

The objective of the test was to make the “Add to Cart” button clearer by adding text in order to increase the number of people who add items to their shopping cart.

The result

After the test, there was a 49% increase in click-through rate compared to the original. This shows that adding the “Add to Cart” text performs better than having a symbol.

Image source: https://www.abtasty.com/blog/learn-from-5-ab-test-case-studies/

In the image, you will see:

- On the far left: the original version, which has a small shopping cart with a “+” sign but no text;

- Center and right: These are two versions that were part of the test and have text. The first one, i.e. the one in the center, increased additions to the shopping cart by 49% compared to the original.

Lesson learned

Visitors interact better with textual content than images or symbols that can confuse them. Therefore, try to have a direct and clear CTA that will help your visitors automatically know what the button does. You will agree with me that it makes no sense to have a CTA that your visitors don’t understand.

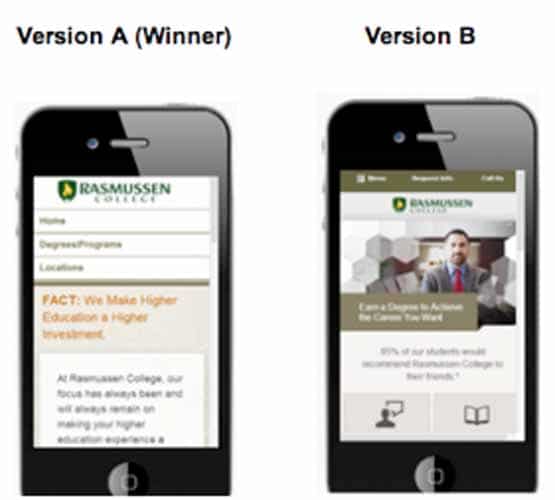

Case study 2: 256% increase in leads after using a mobile-optimized landing page

AB Tasty also gives this example of a test performed by Rasmussen College. This is a private for-profit college that wanted to increase the number of leads through paid traffic on their mobile site.

The objective of the test

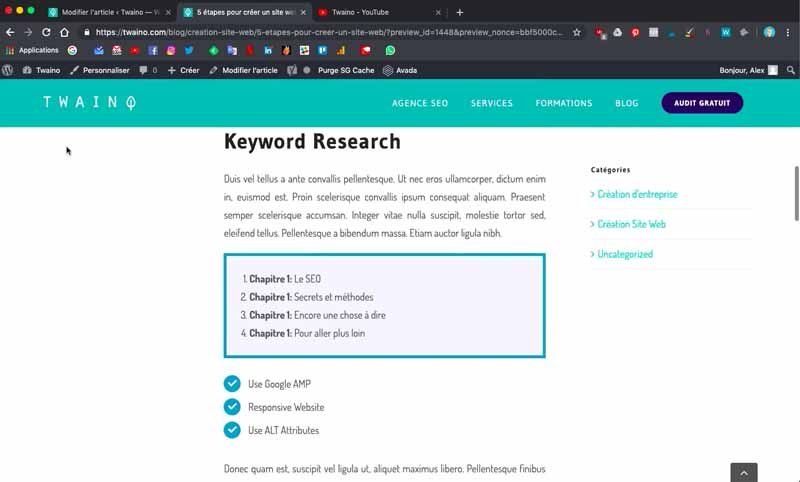

The objective of the test was to create a new mobile-friendly website with a clickable menu to improve conversions.

The result

Conversions increased by 256% after the implementation of a new phone-friendly website.

Image source: https://www.abtasty.com/blog/learn-from-5-ab-test-case-studies/

Lesson learned

57% of consumers will not recommend your business if your mobile website is poorly designed, and 48% will think you don’t care about them. Given these figures, it is very important to make your website responsive if you haven’t already done so.

In fact, it’s essential if you don’t want to lose customers just because your site doesn’t look right.

Case Study 3: Increasing CTR by 433% through image and text change

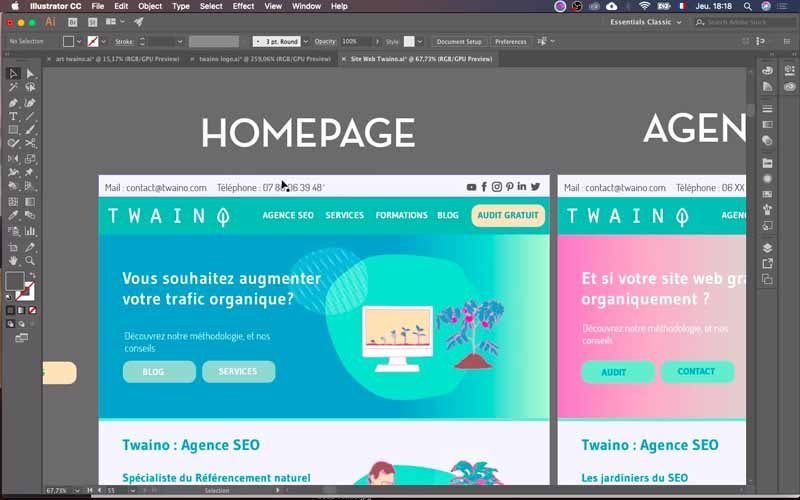

This case study comes from Crazy Egg and concerns an A/B test run by the insurance company Humana. The company wanted to generate more interaction with its banner, so it ran an A/B test with a few modifications.

The objective of the test

Humana wanted to increase its click-through rate on the banner by making simple text and image changes.

Source: https://www.designforfounders.com/ab-testing-examples/

To do this, Humana decreased the amount of text and changed the image that was present on its original banner. In addition, the CTA was changed from “Shop 2014 Medicare Plans” to “Get Started Now” with a different button and color.

Result

Simply reducing the text and changing the image resulted in a 433% increase in click-through rate. After changing the CTA text, Humana saw another 192% increase.

Lesson learned

Simplicity is a very important factor in marketing. When you have a product to offer, you want to highlight its amazing features or benefits, but consumers don’t necessarily want this information everywhere.

This A/B test example shows that people often respond better to shorter text and simpler CTAs. This doesn’t mean you shouldn’t talk about your product; you can dedicate a whole page to it. This is a very important concept, and I recommend checking my article on the sales funnel to learn when and where to talk about your products’ features.

We’ve just seen three case studies that can inspire you to do your own test. So let’s move on to the different steps for conducting your tests.

15 simple steps to perform an A/B test

To run a successful A/B testing campaign, there are actions to perform before, during and after the test.

Before the A/B test: Checklist of things to do

1. Choose a variable to test

Many factors come into play when you want to optimize a web page or an online campaign. But to evaluate the effectiveness of a change, it is wise to isolate a single variable to measure its performance.

Otherwise, you won’t know for sure which element is responsible for the observed changes. Of course, you can test more than one variable for a single web page or email, but be sure to test them one at a time.

Here are ten variables you can include in an A/B test:

1.1. The color of your buttons

The color of a call-to-action button can affect conversions and visitor interaction, depending on how the color stands out from the rest of the web page. Test a few colors and see which one prompts the most action from visitors.

1.2. The titles of your pages

Changing a few words in your page title could also have a major impact on your performance, especially in terms of click-through rate. For this, don’t hesitate to test different titles on your page, especially on your landing pages to see which title attracts the most prospects.

On this subject, you can consult my guide on how to create catchy titles.

1.3. Images in your content

What image sizes should you use? Should you use original images like I do, or stock images? Based on several studies, VWO believes that a picture of a person on a landing page has a positive impact and increases the conversion rate. Wouldn’t it be interesting to test this kind of image on your landing pages too?

1.4. Text positioning

Do you have a main text that is catchy enough to encourage your visitors to take an action? If so, where should you place it for maximum impact?

Also, if you can’t decide which main text to use, simple A/B testing can help you determine this.

1.5. The position of the CTAs

Where should a call to action be placed on your web page to best direct visitors to the page you want? Obviously, you will place it where visitors can see it right away. But should it be on the left, center, right, top or bottom? The only way to answer this question is to run an A/B test.

1.6. Layout of your landing pages

Test different layouts to optimize the user experience. Try one column, two columns, or a given color mix to end up with landing pages that convert a significant share of your visitors.

1.7. The number of form fields

Wouldn’t it be great if you could increase the number of conversions from your web pages simply by removing a field from your forms? Or maybe you think it would be a good idea to add another field to your form.

Run an A/B test to see if this negatively or positively affects your conversion rate.

1.8. Your different offers

Depending on the context of the page, visitors will not react to your offers in the same way. For example, offering a free trial of a tool in your blog content will have a different effect than offering free ebooks there.

Perhaps it would be more effective to put the free trials on your product pages and the free ebooks in your blog posts.

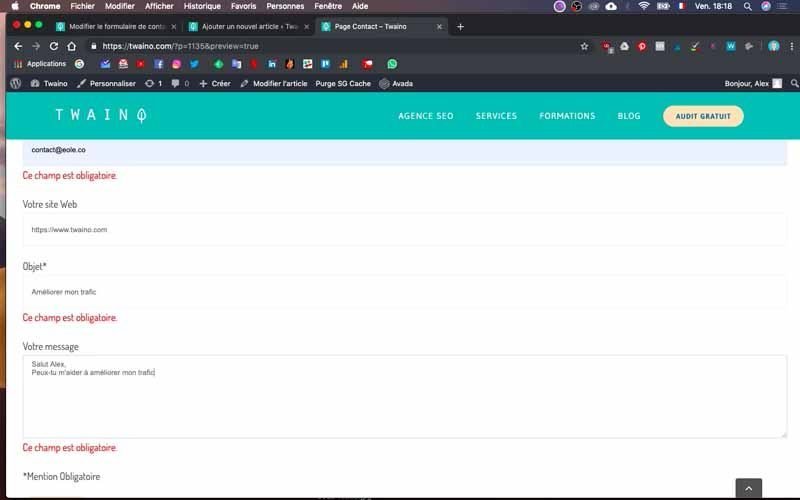

1.9. The emails

Which subject line generates the highest open rate for your emails? Does including your prospects’ first or last name help you get more conversions? You can test all these aspects to optimize your performance.

1.10. Pricing

The idea is not to show your visitors different prices in an A/B test, but to change the way you display them. I invite you to consult this resource from Convertize, which shows the best ways to display prices. Learn about it and test these options to find what works best for you.

In summary, look at the different aspects of your marketing strategies and their possible alternatives in terms of:

- Design;

- Wording;

- Layout.

Keep in mind that even simple changes, like changing the image in your email or the words on your call-to-action button, can have a big impact on your performance.

However, sometimes it may make more sense to test multiple variables simultaneously rather than just one. It depends on your goals, but it’s important not to overdo it to avoid skewing your analytics.

2. Determine your objective

Although a single A/B test will give you a certain amount of data, it is important to choose one metric to focus on. For example, if you want to change the title of your page, which metric do you want to look at: traffic or conversions on the page?

The answer to this question lets you define a goal, or the outcome you want. You can then formulate a hypothesis and evaluate your results against that prediction.

In other words, you will consider how the changes you make will affect users. For example, you may assume that changing your page title will double the traffic to your page.

This step is important because it allows you to design the second version of your page so that the variable you want to evaluate is highlighted.

In fact, if you wait until the end before thinking about which metrics are most important to you, what your goals are, and how your proposed changes might affect user behavior, your test may become ineffective.

3. Create a “control” and a “challenger” version

After the previous steps, use this information to set up the versions you want to test. The “control” version is simply the existing web page you already use.

Based on this version, you will build the “challenger” version of what you want to test. For example, if you’re wondering if including a testimonial on a homepage would make a difference, set up your control page without testimonials and then create your variant with a testimonial.

4. Distribute your sample groups equally and randomly

For tests where you have more control over the audience, such as emails, it is recommended to split your list into two or more audiences. These audiences must be equal in size and randomly selected to get conclusive results.

You should know that this process differs depending on the tool you use for your A/B testing. Most tools, however, allow you to automatically split the traffic between the different versions you have so that each version receives a random sample of visitors.

5. Determine your sample size

To determine the size of your sample, you will take into account not only the tool you are using but also the type of A/B test you have chosen.

If your test applies to an email campaign, you will probably send the different versions to a portion of your list that is large enough to give statistically significant results. You then send the best-performing version to the rest of the list.

Some tools let you run A/B tests with an automatic 50/50 split of your sample. This feature doesn’t work for every parameter, and you usually need a list of at least 1,000 recipients.
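For an email campaign, you can sketch both the random, equal split and the “test on part of the list, then send the winner to the rest” approach in a few lines of Python. The 20% test share and the function name `split_email_list` are assumptions for illustration. Your emailing tool may handle this for you.

```python
import random


def split_email_list(recipients, test_fraction=0.2, seed=None):
    """Randomly split a mailing list into two equal test groups and a remainder.

    test_fraction is the share of the list used for the test (both groups
    together); the remainder later receives the winning version.
    """
    shuffled = list(recipients)
    random.Random(seed).shuffle(shuffled)  # random order removes selection bias
    group_size = int(len(shuffled) * test_fraction) // 2
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    remainder = shuffled[2 * group_size:]
    return group_a, group_b, remainder


recipients = [f"user{i}@example.com" for i in range(5000)]  # hypothetical list
a, b, rest = split_email_list(recipients, test_fraction=0.2, seed=1)
print(len(a), len(b), len(rest))
```

With 5,000 hypothetical recipients and a 20% test share, each version goes to 500 people and the remaining 4,000 receive the winning version.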

However, if you are testing something that does not have a finite audience, such as a web page, time is the factor that directly affects your sample size. You need to let your test run long enough to collect a significant amount of traffic; otherwise, it will be hard to tell whether there is a significant difference between your two versions.
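A common way to estimate how much traffic “long enough” means is the standard sample-size formula for comparing two proportions. The Python sketch below gives one such estimate; it is not the method of any specific tool. Two inputs are assumptions. The 80% statistical power is a widely used convention. `expected_rate` is the conversion rate you hope the challenger will reach.

```python
from math import ceil
from statistics import NormalDist


def sample_size_per_variant(baseline_rate: float, expected_rate: float,
                            confidence: float = 0.95, power: float = 0.80) -> int:
    """Visitors needed in EACH version to detect the change from
    baseline_rate to expected_rate with a two-sided two-proportion test."""
    if expected_rate == baseline_rate:
        raise ValueError("expected_rate must differ from baseline_rate")
    z_alpha = NormalDist().inv_cdf(1 - (1 - confidence) / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = baseline_rate * (1 - baseline_rate) + expected_rate * (1 - expected_rate)
    effect = expected_rate - baseline_rate
    return ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


# Hypothetical page converting at 5%.
print(sample_size_per_variant(0.05, 0.06))   # hoping for 6%: a little over 8,000 per version
print(sample_size_per_variant(0.05, 0.055))  # hoping for 5.5%: roughly four times as many
```

The smaller the improvement you want to detect, the more visitors you need. That is why tests on low-traffic pages have to run longer.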

6. Decide how important your results are

Once you’ve defined your goals, think about how significant your results need to be to justify choosing one version over another. In other words, answer the question: are your results reliable enough to make a decision?

Statistical significance is an important concept in A/B testing, and it is wise to master it. You can check out this resource from HubSpot to understand it.

Basically, the higher your confidence level, the more confident you can be about your results. A minimum level of 95% is generally required to confirm that results are significant. However, it can sometimes make sense to use a lower confidence level, especially if the test does not need to be very rigorous.

HubSpot says it’s easier to think of statistical significance as a bet. For example, you might say, “I’m 90% sure this is the right color, and I’m willing to bet everything on it.” Saying this amounts to using a 90% confidence level and declaring a winner on that basis.

It is also recommended to use a higher confidence level for changes that only slightly improve your results. For example, if a major change such as a new design is likely to increase your conversion rate by 10 or 15%, a lower confidence level may be acceptable. On the other hand, if you are changing the color of a CTA and expect only a very small improvement, on the order of 1% or less, a high confidence threshold is more useful.

In summary, be more rigorous with small, targeted changes, since their impact is hard to notice; with radical changes that have a larger impact, you can afford to be less strict.
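In practice, the confidence level you choose becomes a threshold that the observed difference must clear. The Python snippet below assumes the usual two-sided z-test for conversion rates and shows the threshold for the levels mentioned above. The higher the confidence, the larger the difference (relative to random noise) you need before declaring a winner.

```python
from statistics import NormalDist


def critical_z(confidence: float) -> float:
    """Two-sided critical value: |z| must exceed it to reach the given confidence."""
    return NormalDist().inv_cdf(1 - (1 - confidence) / 2)


for level in (0.90, 0.95, 0.99):
    print(f"{level:.0%} confidence -> |z| must exceed {critical_z(level):.3f}")
# 90% confidence -> |z| must exceed 1.645
# 95% confidence -> |z| must exceed 1.960
# 99% confidence -> |z| must exceed 2.576
```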

7. One test at a time for each campaign

Testing more than one thing at a time for a single campaign can complicate your results. For example, if you test a variable on a landing page and you also test an email campaign that directs to that same page, how can you know exactly which parameter caused the increase in leads?

During A/B testing: Use tools for your tests

8. Use an A/B testing tool

To run A/B tests on your website or in an email, you’ll need software that collects the data automatically. For example, you can use Google Analytics, which lets you test up to 10 versions of the same web page and compare their performance using a random sample of visitors. This free solution is not the only one: there are more than ten tools at your disposal.

8.1. VWO

VWO is an A/B testing platform used by more than 4,500 brands, including Ubisoft, eBay, Target and many others. It is designed specifically for businesses and lets you run:

- A/B testing;

- Split URL tests;

- Multivariate tests.

VWO also offers multiple features to measure the performance of your tests, including SmartStats, which uses statistics to help you:

- Run tests faster;

- Better control your tests;

- Draw more accurate conclusions.

8.2. Optimizely

Optimizely is a digital experimentation platform used by 24 of the top 100 wealthiest companies. With its powerful experimentation tool, you can run multiple experiments on a single page simultaneously, allowing you to test different variables in your web design.

Optimizely goes further by also offering tests on:

- Advertising campaigns;

- Dynamic websites;

- Geography;

- Various parameters such as device, browser…

- Etc.

8.3. AB Tasty

Used by major brands such as L’Oreal, Sephora, USA Today and many others, AB Tasty is a conversion rate optimization software that offers:

- A/B and multivariate testing;

- Data analysis;

- Marketing and personalization tools.

With this tool, you can easily conduct:

- Your A/B testing;

- Your split testing;

- Your multivariate testing;

- Your sales funnels.

Moreover, AB Tasty’s advanced targeting will allow you to perform your tests according to various criteria such as:

- The URL;

- Geolocation;

- Weather;

- And so on.

For the validation of your tests, AB Tasty offers reports that display your tests and their confidence level in real time. If you have a medium-sized business, AB Tasty could be a perfect fit for you.

8.4. Crazy Egg

Crazy Egg is a website optimization software that offers tools for:

- A/B testing;

- Heat mapping;

- Usability testing.

Their A/B testing tool allows you to test variations of each page of your website by simply adding a piece of code to the pages you want to test.

In addition to creating tests, Crazy Egg allows you to automatically send more traffic to the optimal variation of your test once it recognizes that it is the winner. In addition, you get other intuitive conversion tracking and reporting tools. Crazy Egg is a tool specially developed for small businesses.

8.5. Omniconvert

Like the other tools, Omniconvert offers an A/B testing tool that comes with other tools:

- Survey;

- Customization;

- Overlays;

- Segmentation.

Omniconvert works on desktop, tablet and mobile. The firm goes one step further by combining its A/B testing tool with its segmentation tool, which lets you test more than 40 segmentation parameters such as:

- Traffic source;

- Geolocation;

- Visitor behavior;

- Etc.

This data allows you to improve other elements such as:

- The features of your product;

- The user experience;

- The ability of the content to convert or engage.

For a medium-sized company, Omniconvert could be a great A/B testing solution.

8.6. Freshmarketer

Freshmarketer is a tool you can integrate with Google Analytics that allows you to test and then validate your results. In addition, you have the ability to track the amount of revenue generated by your experiments.

Besides, its split URL testing tool can help you to:

- Test multiple URL variations;

- Transform winning test variations into real web pages;

- Etc.

Freshmarketer could be your ideal tool if you have a small business.

8.7. Convert

Used by brands such as Sony, Unicef and Jabra, Convert is an A/B testing and web personalization software that offers tools for:

- A/B testing;

- Split testing;

- Multivariate testing;

- Multipage experimentation.

Convert also offers an advanced segmentation tool that allows you to segment users based on:

- Their behavior;

- Their history;

- Cookies;

- JavaScript events.

In addition, Convert can measure the performance of all your tests by reporting on a wide range of metrics, from the click-through rate of your variations to their ROI.

If you want to use Convert in conjunction with your other tools, they offer a ton of integrations with third-party tools:

- WordPress;

- Shopify;

- HubSpot;

- Etc.

Convert is best suited for small businesses.

8.8. Fivesecondtest

As its name suggests, this tool shows you what visitors remember about your web design after 5 seconds. In other words, you can easily find out what visitors like or dislike about your website.

8.9. Kameleoon

Used by companies such as Renault, Toyota, L’EQUIPE, Le Monde and many others, Kameleoon is a simple solution that allows you to:

- Perform your A/B testing;

- Segment your audience;

- Personalize your content, your emails, your products…

- Etc.

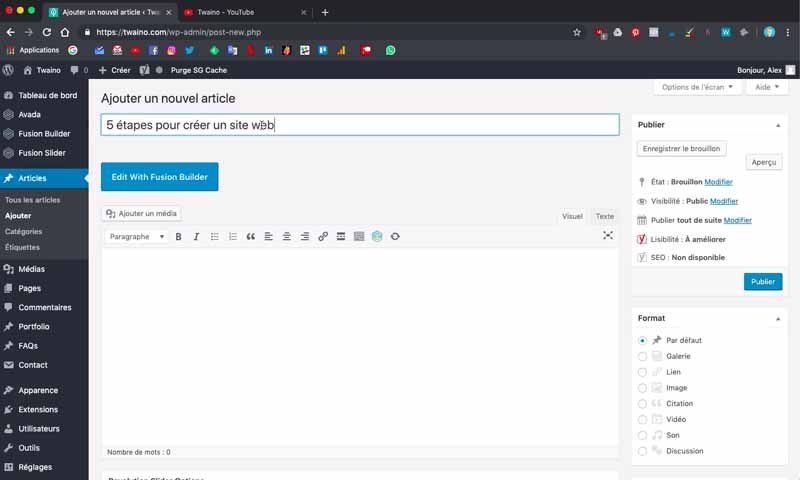

8.10. Nelio A/B Testing for WordPress

If you use the WordPress CMS, Nelio is a powerful solution for:

- E-commerce;

- Publishers;

- Marketers;

- Nonprofits;

- Education;

- Etc.

Nelio is an easy-to-use tool that allows you to run your tests and improve your performance.

8.11. Unbounce

More than 15,000 retailers use Unbounce. This tool has a very simple interface that you can use to run your tests and customize your web pages.

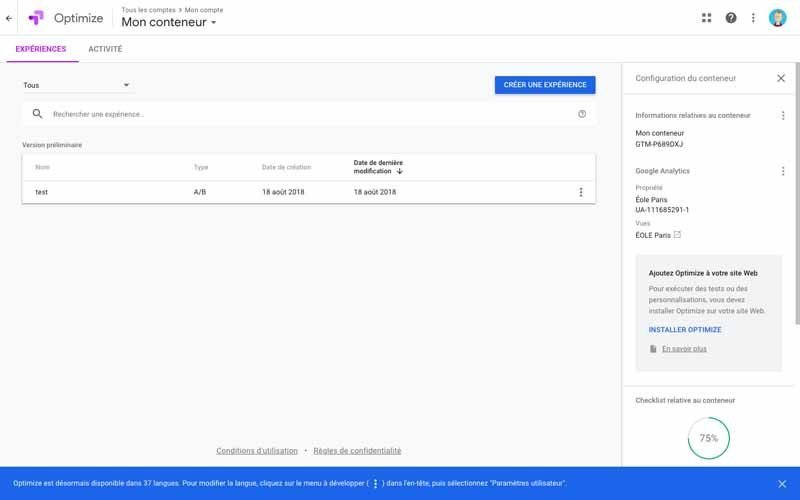

8.12. Google Optimize

The free solution from the Mountain View firm is connected to Google Analytics and lets you conduct:

- Your A/B tests;

- Your multivariate tests;

- Your redirect tests;

- Etc.

In addition to an advanced statistical model, Google Optimize offers sophisticated targeting tools that allow you to offer an experience adapted to your audience’s expectations.

8.13. HubSpot & Kissmetrics A/B Testing Kit

HubSpot offers a free kit with everything you need to run A/B tests:

- An A/B testing tracking template;

- A handy instructional and inspiration guide;

- A statistical significance calculator to see whether your tests won, lost or were inconclusive.

This tool is ideal for companies that are just getting started with A/B testing, or for companies that need a way to track their existing tests.

Note that the whole kit is in English; if you have difficulties with this language, another solution may suit you better.

9. Test both versions simultaneously

Timing is a very important factor in the results of a marketing campaign. If you run version A for one month and version B the following month, how will you know whether the change in performance was caused by your modifications or by the difference between the two periods?

For example, if you sell sunglasses and run the first version in January and the second in February, your analysis may be completely distorted because demand for sunglasses is higher in February. You will not know whether this demand or your changes caused the difference in performance.

Therefore, run all the versions of your A/B test simultaneously; otherwise, you may doubt your results.

The exception is when you are testing timing itself, for example to find the best times to send your emails. In fact, timing is a very interesting factor to add to your list of things to test.

10. Take enough time to get useful data

To get a sample large enough to detect significant differences between your two versions, it is very important to give your A/B test enough time.

Getting statistically significant results will vary depending on the size of your business and how you run your test. If your business receives a lot of traffic, maybe a few hours will be enough. But if you don’t have a lot of traffic, you may need a few days or weeks.

At this stage, I would advise against A/B testing if you have just launched your website and have very little traffic. Your data may not be reliable, which can distort your analysis.
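To turn “a few hours” or “a few weeks” into a rough number for your own site, divide the visitors your test needs by the traffic you receive. This Python sketch assumes steady daily traffic. The visitor figures in the example are hypothetical.

```python
from math import ceil


def estimated_test_days(visitors_per_variant: int, daily_visitors: int,
                        variants: int = 2, share_in_test: float = 1.0) -> int:
    """Rough number of days needed, assuming traffic stays steady.

    share_in_test is the fraction of daily visitors included in the test.
    """
    visitors_in_test_per_day = daily_visitors * share_in_test
    return ceil(visitors_per_variant * variants / visitors_in_test_per_day)


# Hypothetical test needing 8,000 visitors per version:
print(estimated_test_days(8000, 20_000))  # busy site: 1 day
print(estimated_test_days(8000, 300))     # small site: 54 days
```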

11. Ask for feedback from real users

A/B testing mainly gives you quantitative data, which does not necessarily help you understand why people take certain actions rather than others. While your test is running, why not collect feedback directly from some of your users?

Conducting a survey is a very effective way to get your visitors’ opinions. To do this, you can add an exit pop-up or a short survey on the thank-you page that asks visitors, for example, why they did not click on a particular CTA.

After the A/B test: Analyze and improve

12. Focus on your goal

Even if you measure several parameters, focus on your main objective when performing your analysis.

For example, if you changed the color of your CTA button and chose conversion as the primary metric to measure, don’t get distracted by the click-through rate.

In fact, one variant may have a higher click-through rate but a lower conversion rate. In that case, you should choose the variant with the highest conversion rate, even if its click-through rate is lower.
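The rule is easy to make explicit: rank the variants on the primary metric you chose before the test and nothing else. In the sketch below, the `VariantResult` class, the `pick_winner` function and the visitor, click and conversion counts are hypothetical and only illustrate a case where the two metrics disagree.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass
class VariantResult:
    name: str
    visitors: int
    clicks: int
    conversions: int

    @property
    def click_through_rate(self) -> float:
        return self.clicks / self.visitors

    @property
    def conversion_rate(self) -> float:
        return self.conversions / self.visitors


def pick_winner(results: list[VariantResult],
                primary_metric: str = "conversion_rate") -> VariantResult:
    """Return the variant with the best value of the primary metric only."""
    return max(results, key=lambda r: getattr(r, primary_metric))


if __name__ == "__main__":
    # Hypothetical figures: B gets more clicks (11% vs 8%),
    # but A converts better (1.2% vs 0.9%).
    a = VariantResult("A", visitors=5000, clicks=400, conversions=60)
    b = VariantResult("B", visitors=5000, clicks=550, conversions=45)
    print(pick_winner([a, b]).name)  # → A
```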

13. Measure the significance of your results

Your results tell you which variation performed best. Now it’s time to determine whether those results are statistically significant, in other words, whether they are strong enough to justify a change.

To find out, you will perform a test of statistical significance, as I have discussed in previous chapters. This calculation can be done manually or with an online tool. Of course, the automated option is easier and faster, since you only have to enter the data you have collected.
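If you prefer to do the calculation yourself, a common choice for conversion rates is the two-proportion z-test. The sketch below implements its usual pooled form; it is not necessarily the formula any particular online calculator uses, the function name is hypothetical, and the visitor and conversion counts are made-up example data.

```python
import math
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int,
                          conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for the difference between two conversion rates.

    Returns (z, p_value). The standard error uses the pooled conversion rate,
    i.e. it assumes there is no real difference between A and B.
    """
    rate_a = conv_a / n_a
    rate_b = conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))  # two-sided p-value
    return z, p_value


if __name__ == "__main__":
    # Hypothetical data: A converts 200 of 5,000 visitors (4%), B 250 of 5,000 (5%).
    z, p = two_proportion_z_test(200, 5000, 250, 5000)
    print(f"z = {z:.2f}, p = {p:.4f}, confidence = {1 - p:.1%}")
```

With these example figures, z is about 2.4 and the confidence is roughly 98%, above the 95% level many marketers choose, so B’s lift would count as statistically significant.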

You can use Neil Patel’s free calculator to decide quickly. It gives you the confidence level your data produces and the winning variation. You then compare that number with the confidence level you chose at the beginning to decide whether or not to make the change.

14. Act on your results

When the numbers show that one version performs significantly better than the other, you can disable the losing version in your A/B testing tool.

If both versions of your test give similar results, the test is inconclusive. This suggests that the variable you tested has little or no measurable impact on your performance. You can simply keep your original version or run another test on a different parameter.
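The decision rule described in this step can be written down in a few lines. The sketch below assumes you already have the conversion rates of the original (A) and the variant (B) plus a p-value from a significance test; the function name `decide` and the 0.05 threshold (a 95% confidence level) are illustrative assumptions.

```python
def decide(rate_a: float, rate_b: float, p_value: float, alpha: float = 0.05) -> str:
    """Turn A/B test results into an action (hypothetical helper).

    A is the original version, B the variant. alpha = 0.05 corresponds to
    the 95% confidence level chosen before the test started.
    """
    if p_value >= alpha:
        # No significant difference: keep the original and test another element.
        return "inconclusive: keep A, test another element"
    if rate_b > rate_a:
        return "B wins: disable A"
    return "A wins: disable B"


if __name__ == "__main__":
    print(decide(0.040, 0.050, p_value=0.016))  # → B wins: disable A
    print(decide(0.040, 0.041, p_value=0.600))  # → inconclusive: keep A, test another element
```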

In either case, it is important to record the lessons you learn from each test and use them to refine your next tests.

15. Plan your next A/B test

You’ve just completed an A/B test that helped you discover a new way to make your marketing content more effective. But it would be a shame to stop there, especially since there’s always room for more optimization.

You can run another A/B test on a different element of the same page to improve its performance even further.

For example, if you just tested a CTA button on your landing page, why not test the main text of that page next? Or try a video instead of images?

It’s wise to always be on the lookout for opportunities to optimize the performance of your website or email campaigns.

Conclusion: A/B Testing – A simple and very profitable concept

Maintaining or gaining visibility on the web nowadays requires a constant effort to improve performance. A position you hold in the SERPs today can quickly be lost to the competition if you do not optimize regularly. Several methods, tools and marketing approaches have been developed for this purpose, including A/B testing. Its operating principle is very simple: if you want to improve your content, you test it first. Depending on the results, you either keep the original version or validate the new one. This avoids making blind changes that hurt your performance instead of improving it. In this way, A/B testing lets you keep making data-backed changes that improve the performance of your website or your email campaigns. However, you need to know how to choose the variables to test so that you obtain significant results and can make relevant decisions.

So, do you want to launch your first A/B testing campaign?