Crawler

Quick Overview: Deep Crawl

Deep Crawl is a website crawler that audits your site’s technical performance to help you improve its indexability and user experience for SEO purposes.

Description Deep Crawl

If you need to examine the technical health of your website, Deep Crawl is a crawler that lets you see how search engines crawl your site.

The tool provides a wide range of information about each page of your website to give you a clear idea of how likely it is to be indexed.

Let’s find out in this description how Deep Crawl works.

What is Deep Crawl?

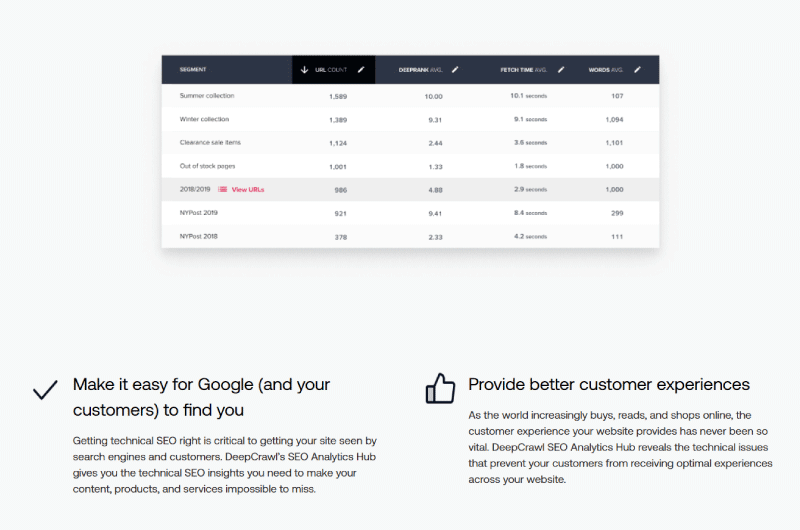

The tool works by extracting data from different sources such as XML sitemaps, Google Search Console, or Google Analytics.

It lets you link up to 5 sources so that the analysis of your pages is more relevant and effective.

The goal is to simplify the detection of flaws in your site’s architecture by providing data such as orphan pages generating traffic.
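As a rough illustration of what "orphan pages generating traffic" means, the sketch below compares two inputs: the set of URLs reached by following internal links, and visit counts from an analytics export. The function name, the input shapes, and the sample URLs are hypothetical; this is not Deep Crawl's own implementation, only a minimal sketch of the idea.

```python
def find_orphan_pages_with_traffic(crawled_urls, analytics_visits):
    """Return (url, visits) pairs for pages that get visits but were
    never reached by following internal links.

    crawled_urls: set of URLs discovered through internal links (assumed input).
    analytics_visits: dict mapping URL -> visit count (assumed input).
    """
    orphans = {
        url: visits
        for url, visits in analytics_visits.items()
        if visits > 0 and url not in crawled_urls
    }
    # Sort by traffic so the most valuable orphan pages come first.
    return sorted(orphans.items(), key=lambda item: item[1], reverse=True)


crawled = {"https://example.com/", "https://example.com/blog"}
visits = {
    "https://example.com/": 120,
    "https://example.com/old-landing": 45,
    "https://example.com/promo": 80,
    "https://example.com/draft": 0,
}
orphan_pages = find_orphan_pages_with_traffic(crawled, visits)
```

Here `orphan_pages` lists `/promo` and `/old-landing`, the two pages that receive visits without being linked from the crawled structure.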

The tool gives you a clear view of your website’s technical health and actionable insights you can use to improve SEO visibility and convert organic traffic into revenue.

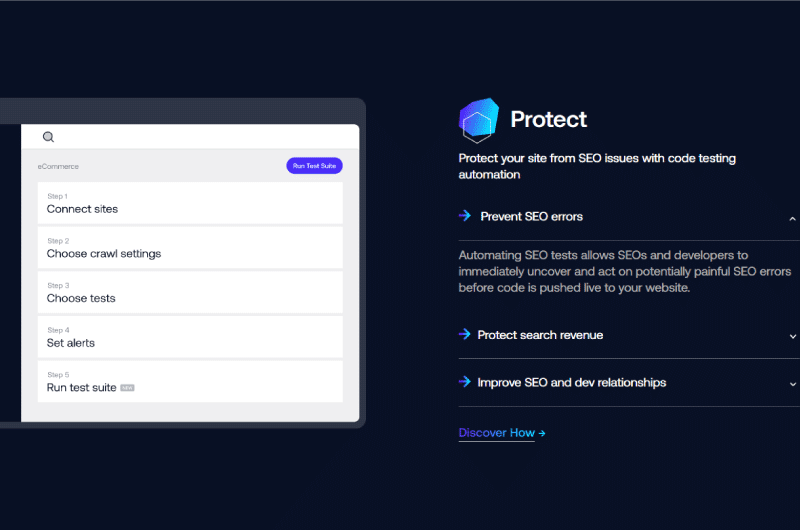

Setting up a website for crawling can be done in four simple steps.

When you sign up for a free trial of Deep Crawl, you are redirected to the project dashboard, where setup begins. The second step is to choose the data sources for the crawl.

By following the instructions, you can quickly set up your website and move on to the most important things:

Dashboards

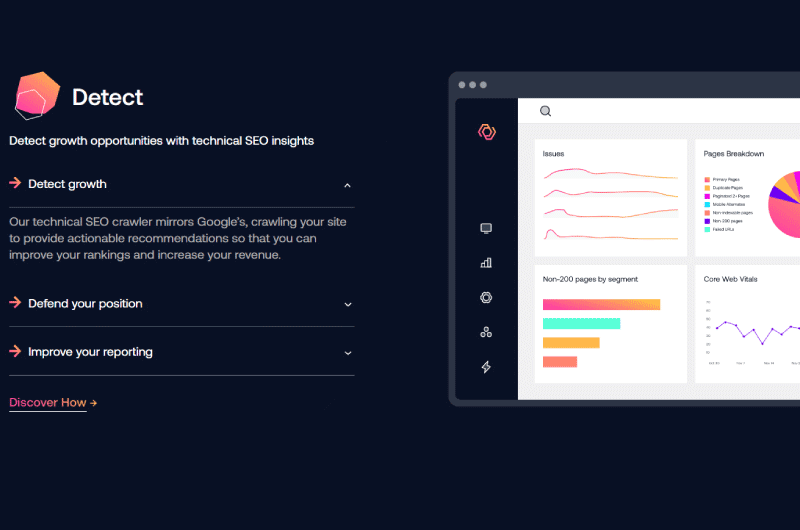

When Deep Crawl finishes crawling a website, a fairly intuitive dashboard gives a comprehensive and precise view of all the log file data identified.

A first graph gives a quick and complete breakdown of all requests by device type, desktop or mobile.

This is followed by a summary that highlights not only the main issues with crawling the website, but also all the elements that can, in one way or another, prevent its pages from being indexed.

Deep Crawl extracts and analyzes the data from all the sources you linked during setup and combines it with log file data to better qualify each detected issue.

We observe from this report that many of the URLs listed in the XML sitemaps have not been requested.

This can be due to weak internal linking between pages, or to a site architecture so deep that crawlers rarely reach the pages at the bottom.

Also, when XML sitemaps are outdated, they no longer reflect the active URLs.

From this we can deduce that managing crawl budget is very important, especially if you have a large website.
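The sitemap observation above can be reproduced by hand on a small scale. The following sketch, using only the Python standard library, parses a sitemap and subtracts the URLs that appear as requested in the logs. The helper names and the sample sitemap are assumptions made for illustration, and the list of requested URLs is taken as already extracted from the log files.

```python
import xml.etree.ElementTree as ET

# Namespace defined by the sitemaps.org protocol.
SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}


def sitemap_urls(xml_text):
    """Extract every <loc> value from a sitemap document."""
    root = ET.fromstring(xml_text)
    return {loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS)}


def unrequested_urls(xml_text, requested_urls):
    """Return sitemap URLs that never appear among the requested URLs."""
    return sorted(sitemap_urls(xml_text) - set(requested_urls))


SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/deep/page</loc></url>
</urlset>"""

missing = unrequested_urls(SAMPLE_SITEMAP, ["https://example.com/"])
```

In this sample, `missing` contains only `https://example.com/deep/page`, the URL that is listed in the sitemap but was never requested.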

Deeper Analysis

The Deeper Analysis section consists of two sets of data: “Bot Hits” and “Issues”.

The first set of data, “Bot Hits”, lists the requested URLs, taking into account not only the total number of requests but also the devices used to make them.

The idea is to help you see which pages crawlers focus on most when crawling your website and areas of deficiency such as potentially obsolete or orphan pages.

There is also a filter option that lets you spot all the URLs that are likely affected by a given issue.
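To make the idea of bot hits per URL and per device more concrete, here is a minimal sketch that counts crawler requests from already-parsed log records. The record shape `(url, user_agent)`, the function name, and the simple rule "a user agent containing `Mobile` is a smartphone crawler" are assumptions chosen for illustration, not a description of how Deep Crawl classifies requests.

```python
from collections import Counter


def bot_hits(records, bot_token="Googlebot"):
    """Count crawler requests per (url, device) pair.

    records: iterable of (url, user_agent) tuples (assumed input shape).
    """
    hits = Counter()
    for url, user_agent in records:
        if bot_token not in user_agent:
            continue  # skip human visitors and other bots
        # Simplifying assumption: smartphone crawlers advertise "Mobile".
        device = "mobile" if "Mobile" in user_agent else "desktop"
        hits[(url, device)] += 1
    return hits


records = [
    ("/", "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"),
    ("/", "Mozilla/5.0 (Linux; Android 6.0.1) Mobile Safari/537.36 (compatible; Googlebot/2.1)"),
    ("/", "Mozilla/5.0 (Linux; Android 6.0.1) Mobile Safari/537.36 (compatible; Googlebot/2.1)"),
    ("/blog", "Mozilla/5.0 (Windows NT 10.0; Win64; x64) Firefox/120.0"),
]
hits = bot_hits(records)
```

URLs that never show up in `hits`, such as `/blog` here, are the ones worth checking for obsolete or orphan status.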

Additionally, the “Issues” set shows any issues affecting your domain, with full error details based on log file data.

These are essentially:

Error Pages

This section provides an overview of all URLs found in the log files that return a 4XX or 5XX server response code.

These URLs can cause serious problems for the website and need urgent repair.
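For readers who want to see what such a report boils down to, the sketch below scans access log lines and keeps the paths that returned a 4XX or 5XX status. It assumes logs in the common/combined access log layout; the regular expression and the function name are illustrative and would need adjusting for other log formats.

```python
import re

# Matches the quoted request and the status code that follows it,
# e.g. "GET /missing HTTP/1.1" 404
REQUEST_RE = re.compile(
    r'"(?P<method>[A-Z]+) (?P<path>\S+) [^"]*" (?P<status>\d{3}) '
)


def error_pages(log_lines):
    """Map each requested path to the set of 4XX/5XX codes it returned."""
    errors = {}
    for line in log_lines:
        match = REQUEST_RE.search(line)
        if match is None:
            continue  # line is not in the assumed format
        status = int(match.group("status"))
        if 400 <= status <= 599:
            errors.setdefault(match.group("path"), set()).add(status)
    return errors


sample_lines = [
    '66.249.66.1 - - [10/Oct/2023:13:55:36 +0000] "GET / HTTP/1.1" 200 2326 "-" "Googlebot/2.1"',
    '66.249.66.1 - - [10/Oct/2023:13:55:40 +0000] "GET /missing HTTP/1.1" 404 512 "-" "Googlebot/2.1"',
    '66.249.66.1 - - [10/Oct/2023:13:56:02 +0000] "GET /checkout HTTP/1.1" 503 0 "-" "Googlebot/2.1"',
]
errors = error_pages(sample_lines)
```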

Non-Indexable Pages

This section highlights URLs that search engines can reach but that are set to noindex, contain a canonical reference to an alternate URL, or are blocked outright in robots.txt, for example.

This report lists URLs along with the number of requests recorded for them in the log files.

In reality, not all of the URLs here are necessarily bad, but it’s a great way to highlight the actual crawl budget allocated to specific pages, so you can rebalance it if needed.
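The three conditions mentioned in this section can be expressed as a small classification function. This is only a sketch: the function name, its parameters, and the reason labels are hypothetical, and it assumes the meta robots value, canonical URL, and robots.txt status have already been collected for each page.

```python
def non_indexable_reasons(url, meta_robots="", canonical=None, disallowed=False):
    """Return the reasons why a URL would be treated as non-indexable.

    meta_robots: content of the page's robots meta tag (assumed input).
    canonical: canonical URL declared by the page, if any (assumed input).
    disallowed: True if robots.txt blocks the URL (assumed input).
    """
    reasons = []
    if "noindex" in meta_robots.lower():
        reasons.append("noindex")
    if canonical and canonical != url:
        reasons.append("canonicalized")
    if disallowed:
        reasons.append("disallowed")
    return reasons


page = "https://example.com/shoes?color=red"
reasons = non_indexable_reasons(
    page,
    meta_robots="NOINDEX, follow",
    canonical="https://example.com/shoes",
)
```

An empty list means none of the three conditions applies, so the page would fall under the indexable pages instead.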

Unauthorized Pages

When you block certain URLs of your site from being crawled in a robots.txt file, this does not mean that no requests for them will appear in the log file.

This section allows you to identify the URLs of your website that can be indexed despite being blocked in a robots.txt file.

It also lets you see which elements are missing from pages that you wanted to keep out of the index, for example pages without the noindex attribute.
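A comparable check can be sketched with Python's standard `urllib.robotparser` module: parse the robots.txt rules, then list the requested URLs that those rules disallow. The function name, the sample rules, and the sample URLs are assumptions for illustration, and the requested URLs are taken as already extracted from the log files.

```python
from urllib.robotparser import RobotFileParser


def disallowed_but_requested(robots_txt, requested_urls, user_agent="Googlebot"):
    """Return requested URLs that robots.txt disallows for the given user agent."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in requested_urls if not parser.can_fetch(user_agent, url)]


ROBOTS_TXT = """User-agent: *
Disallow: /private/
"""

requested = [
    "https://example.com/private/report",
    "https://example.com/blog",
]
blocked_hits = disallowed_but_requested(ROBOTS_TXT, requested)
```

Here `blocked_hits` contains only the `/private/report` URL, which appears in the requests even though the rules disallow it.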

Indexable Pages

Here you can see all the URLs on your website that can easily be indexed by search engines.

What’s special about this feature is that it also shows you a list of URLs that didn’t receive any search engine requests in the provided log files.

In most cases, these are orphan pages, that is to say pages with few or no links from the other pages of the website.

Desktop Pages and Mobile Alternatives

These two reports let you see if your pages are displaying correctly on the devices you set them up for.

This view is particularly useful for sites using a standalone mobile version or for responsive and adaptive sites. It is also a good way to spot less requested URLs, which are probably old or irrelevant.

About Deep Crawl

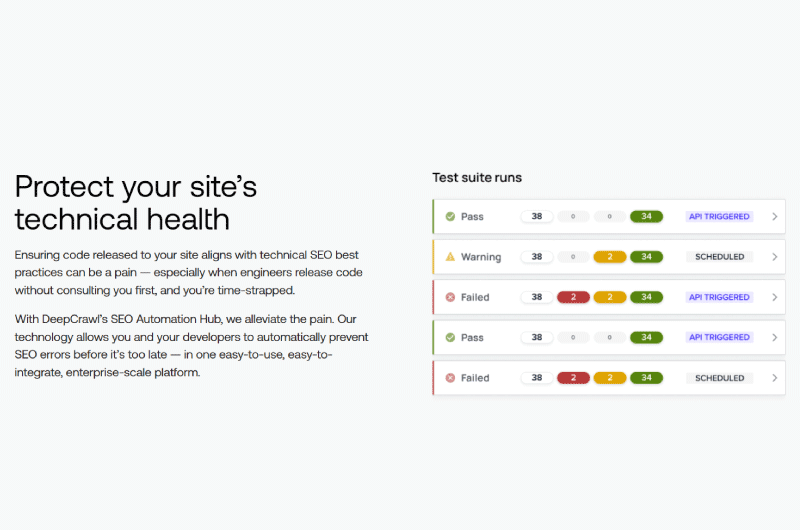

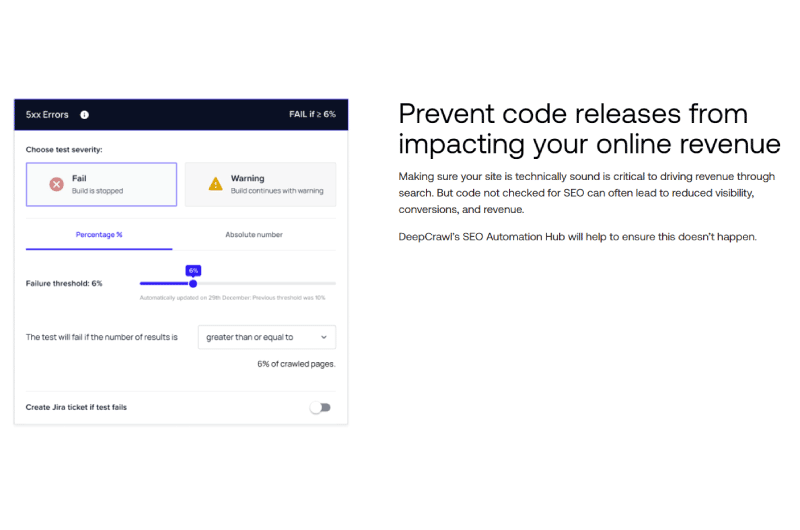

Deep Crawl is a technical SEO platform that helps detect growth opportunities for sites.

It thus helps major brands and companies to exploit their full revenue potential, while protecting their sites against certain code errors.

Deep Crawl positions itself as a command center for technical SEO and website health. The platform uses a fairly powerful crawler that analyzes site data.

Apart from technical SEO, Deep Crawl also offers automation features designed to protect sites from threats.

Deep Crawl has a team of digital professionals led by Craig Dunham.

It is used by many companies, including globally recognized brands such as Adobe, eBay, Microsoft, and Twitch.